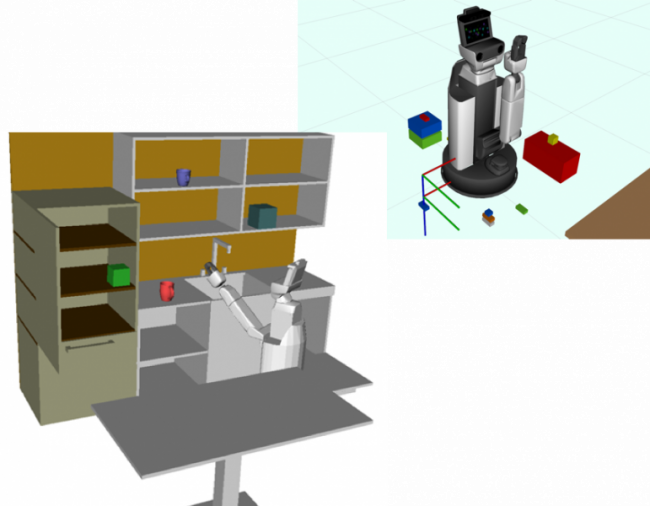

The last few years have seen prolific development of robotic systems, resulting in a variety of well-performing component technologies: image recognition, grasp detection, motion planning, and more. Despite this, few modern robotic systems have been successfully deployed to work alongside humans, making the TRI HSR University Challenge (i.e., cleaning up a play area) an invaluable benchmark. Component technologies alone are not sufficient: successful robotic platforms need to engage with humans and the environment at a high level. Modern systems will need to generate coordinated task-motion plans that can react swiftly to changes in the environment or in the user's goals. These plans will need to be built through a natural dialog with humans that covers plan goals and limiting constraints. For example, a user should be able to tell HSR “clean up the play area” (a goal), “sort the legos by color” (a semantic constraint), “don’t get too close to the china cabinet” (a risk constraint), and “finish before the baby starts taking a nap” (a temporal constraint).

There is a need for a planning and control framework that (1) creates and refines high-level goals by interacting naturally with humans, (2) generates task-level plans with motion-level guarantees, (3) accounts for risk during planning and execution, and (4) reacts nearly instantaneously to changes in the environment. More specifically, users should be able to converse naturally with the system: defining high-level goals, discussing limiting constraints, and adjusting plan details to suit their preferences. The planning framework should generate a risk-aware task policy with corresponding control policies and execution guarantees. Embedded within the task-motion policy should be a set of contingency plans that enable the system to react on the fly to new goals or to new observations of the user or environment.

[Start Date: December 2018]

Publications:

- S. Dai, S. Schaffert, A. Jasour, A. Hofmann, and B. Williams, “Chance Constrained Motion Planning for High-Dimensional Robots,” in 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 2019, pp. 8805–8811 [Online]. Available: https://doi.org/10.1109/ICRA.2019.8793660. [Accessed: 30-Oct-2019]

- M. Orton, S. Dai, S. Schaffert, A. Hofmann, and B. Williams, “Improving Incremental Planning Performance through Overlapping Replanning and Execution,” in 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 2019, pp. 2426–2432 [Online]. Available: https://doi.org/10.1109/ICRA.2019.8793642. [Accessed: 16-Sep-2019]

- N. Bhargava and B. C. Williams, “Faster Dynamic Controllability Checking in Temporal Networks with Integer Bounds,” in Proceedings of the Twenty-Eighth International Joint Conference on Artificial Intelligence, Macao, China, 2019, pp. 5509–5515 [Online]. Available: https://www.ijcai.org/proceedings/2019/765. [Accessed: 10-Oct-2019]